Why searching past alerts tells you little about a platform’s ability to detect and stop attacks in real time. And what to do instead.

There's a ritual that plays out in almost every serious security evaluation in Web3.

A prospect logs into a vendor's platform. They search historical alerts. They find entries tied to known hacks. The alerts are there, timestamped, labeled. The system looks comprehensive. The demo goes well.

What they just evaluated is a filing cabinet.

In onchain finance, the difference between seeing an alert in a database and receiving an alert in real time can be the difference between stopping an attack and documenting a loss.

Evaluating security systems by browsing their alert history has three fundamental problems.

You can't see lead time. The only thing that matters in a real attack is how many minutes, or sometimes seconds, you have to respond before funds move. A real detection fires before the first transaction confirms. A reconstructed log entry tells you nothing about lead time. It tells you the incident happened. Not that anyone saw it coming.
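To make "lead time" concrete, here is a minimal sketch of how you might measure it during an evaluation. It assumes you have recorded two timestamps yourself: when the alert reached your team and when the first exploit transaction confirmed. The function name and the timestamps are hypothetical and for illustration only.

```python
from datetime import datetime, timezone


def lead_time_seconds(alert_at: datetime, first_exploit_tx_at: datetime) -> float:
    """Seconds between the alert and the first confirmed exploit transaction.

    Positive: the alert fired before funds moved.
    Negative: the alert arrived after the fact.
    """
    return (first_exploit_tx_at - alert_at).total_seconds()


# Hypothetical timestamps, not taken from any real incident.
alert_at = datetime(2025, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
first_exploit_tx_at = datetime(2025, 1, 1, 12, 3, 30, tzinfo=timezone.utc)

lead = lead_time_seconds(alert_at, first_exploit_tx_at)
print(f"lead time: {lead:.0f}s")  # prints "lead time: 210s"
```

A historical alert log usually gives you only one of these two timestamps, and not necessarily the time the alert was actually delivered, which is why lead time can't be read off an archive.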

You can't see false positives. An alert log that looks comprehensive might be generating dozens of low-quality alerts per day, noise that trains teams to ignore their own systems. False positive rate is arguably the most important signal in evaluating a detection platform. You cannot assess it by looking at a curated incident library. You can only assess it by running the system live, in production, over time.
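As a rough sketch of what measuring noise looks like in practice, assume your team triages every alert received during a trial and labels it `true_positive` or `false_positive`. The labels, function name, and numbers below are hypothetical. Note that the share computed here is the fraction of alerts that turned out to be false (strictly speaking a false discovery rate), which is the number a team feels day to day.

```python
from collections import Counter


def alert_quality(labels: list[str], days_observed: int) -> dict[str, float]:
    """Summarize alert volume and noise from triaged alert labels."""
    counts = Counter(labels)
    total = len(labels)
    return {
        "alerts_per_day": total / days_observed,
        # Share of all alerts that were labeled false positives.
        "false_positive_share": counts["false_positive"] / total if total else 0.0,
    }


# Hypothetical 30-day trial: 3 real alerts, 27 noisy ones.
triaged = ["true_positive"] * 3 + ["false_positive"] * 27
stats = alert_quality(triaged, days_observed=30)
print(stats)
```

In this made-up trial, nine out of ten alerts are noise. None of that would be visible in a curated incident library that shows only the three real ones.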

You can't verify causation. Seeing an alert in a system doesn't mean the system caused anything. The absence of a counterfactual (what would have happened without that alert) is the entire problem. Was the protocol paused because of the alert? Did funds actually stay safe? Or did the incident resolve itself and the alert get added retroactively to make the coverage map look better?

The gap between "we have an alert for that" and "we detected that in real time and prevented loss" is enormous. And right now, too many evaluations treat those two things as the same.

There are three ways to evaluate a security platform that actually tell you something.

Live credibility = public, verifiable outcomes. The gold standard is cases where a vendor's detection demonstrably resulted in funds being saved, with the customer on record saying so. A protocol team saying: we got an alert, we paused, here's the transaction hash, here's what would have happened otherwise. This kind of evidence is rare. It should be the first thing you ask for.

A useful inverse test: did a protocol that uses a given vendor get hacked anyway? A miss is at least as informative as a save. If a system failed silently (no alert, no action) that's the most important thing to know about that system.

Parallel monitoring = run it yourself. Configure the same scope across multiple systems simultaneously and monitor for a meaningful period. You'll see detection speed, alert volume, false positive rate, and coverage gaps in direct comparison. This takes time, but it removes all ambiguity. You're not comparing marketing claims. You're comparing what each system actually does on your contracts, in your environment.
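One way to keep a parallel trial honest is to log every incident or drill against every vendor, including the ones a vendor missed. The sketch below assumes a simple record per vendor and incident, with the lead time in seconds or `None` when no alert fired. The vendor names, incident IDs, and values are hypothetical.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class Observation:
    vendor: str
    incident_id: str
    lead_time_s: Optional[float]  # None means the vendor never alerted


def summarize(observations: list[Observation]) -> dict[str, dict]:
    """Per-vendor incident count, misses, and median lead time."""
    by_vendor: dict[str, list[Observation]] = {}
    for obs in observations:
        by_vendor.setdefault(obs.vendor, []).append(obs)

    summary = {}
    for vendor, rows in by_vendor.items():
        detected = [r.lead_time_s for r in rows if r.lead_time_s is not None]
        summary[vendor] = {
            "incidents": len(rows),
            "missed": len(rows) - len(detected),
            "median_lead_time_s": median(detected) if detected else None,
        }
    return summary


# Hypothetical results from three drills run against two vendors.
observations = [
    Observation("vendor_a", "drill-1", 120.0),
    Observation("vendor_a", "drill-2", 30.0),
    Observation("vendor_a", "drill-3", None),    # silent miss
    Observation("vendor_b", "drill-1", -45.0),   # alerted after the fact
    Observation("vendor_b", "drill-2", 10.0),
    Observation("vendor_b", "drill-3", 60.0),
]
report = summarize(observations)
for vendor, row in report.items():
    print(vendor, row)
```

Keeping misses and late alerts in the same table as the saves is the point: a vendor with a strong median lead time and one silent miss tells a different story than the median alone.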

Structured benchmarking with a defined scope. If you can't run parallel monitoring, define a fixed set of incidents and ask each vendor to demonstrate detection against the same conditions. Not historical alerts from their archive, but a live simulation or a methodology walk-through against the specific attack surface. Look at how each system approaches detection: ML models, simulation, graph analysis. The architecture tells you what's possible at the edge cases. The receipts tell you what actually happened.

We've been in enough evaluations to know that talking about this doesn't move people. Evidence does.

Below are seven cases where Hypernative detected a real attack, in real time, with documented outcomes. Each one has a timestamp. Most have a customer on record. Two of them involve protocols that weren't our customers at all.

SparkDEX: How the Attacker Lost Money

On Aug. 7, a would-be exploiter attempted to drain $1.5M from SparkDEX on the Flare chain. Hypernative flagged the attacker's contract deployment at 3:56 AM UTC, before the first exploit transaction hit. The SparkDEX team was alerted and paused the perpetuals module within minutes. When the dust cleared, the attacker had lost $85K of their own funds, deposited for a follow-on attack that never landed.

It may be the first documented case of an exploiter losing money in a DeFi attack. That outcome required a detection, a trusted alert, and a team that moved fast enough to act on it.

Read more: How SparkDEX Saved $1.5M With Automated Detection of a Failed Exploit

Kinetic: $5M Saved on a Saturday Morning

On Oct. 31, Hypernative detected a flash loan and permission vulnerability exploit targeting Kinetic Market, the premier lending protocol on the Flare Network. The platform identified the attack using out-of-the-box detection settings available to all customers. Kinetic paused the market shortly after the alert fired, limiting losses to a fraction of what was at risk and ensuring all affected users were made whole.

This was an economic attack, not a code flaw. What an audit couldn't have caught, real-time detection did.

Read more: How Kinetic Stopped a Hack and Saved $5M With Hypernative

Olympus: 3 Minutes, $180M Protected

Early on Saturday, Sept. 21, 2024, Hypernative detected unusual activity on an Olympus DAO utility contract and notified the team within 3 minutes. An exploit vector in the Cooler Consolidation Contract gave an attacker the ability to drain DAI and gOHM balances up to each user's approval amount. Thanks to the speed of detection and response, damage was contained to $29K against a treasury of $180M.

The Olympus team has since moved to automate their pause function, so the next response doesn't require anyone to wake up at all.

Read more: Beating the Hack: How a Timely Alert Helped Olympus Save User Funds

Reserve: The Governance Attack Nobody Saw Coming

In the early hours of Feb. 4, an attacker submitted a malicious governance proposal targeting hyUSD, a dormant decentralized token folio on the Reserve platform. The proposal called for upgrading two core contracts to an unverified address, with no forum discussion and no warning. The attacker's bet was simple: a deprecated asset attracts minimal attention, and a malicious proposal can pass quietly before anyone organizes a response.

Hypernative flagged the proposal the moment it appeared. ABC Labs confirmed the threat, alerted the community via Telegram, and RSR holders mobilized fast enough to outweigh the attacker's voting power. Within 24 hours, the attacker had unstaked and walked away empty-handed.

Read more: How Reserve Secures a DTF Ecosystem Built on Decentralized Control

Venus: The Alert That Went to a Non-Customer

On Sept. 2, 2025, a Venus protocol user with a large position was compromised in a phishing attack and tricked into approving a malicious contract as a delegate, which drained $13M in a single multi-step transaction. Hypernative's systems had flagged the attacker's contract as suspicious the day before. When the exploit executed, Hypernative's CTO reached out to the Venus team via Telegram less than 2 minutes after the first loss.

Venus was not a Hypernative customer. There was no contract, no onboarding, no commercial relationship. The alert fired anyway. Venus paused the protocol, executed a liquidation strategy that seized $3M of the attacker's collateral, and recovered nearly $13M in user assets.

Read more: How Venus Saved ~$13M in a Phishing Attack After Hypernative's Detection

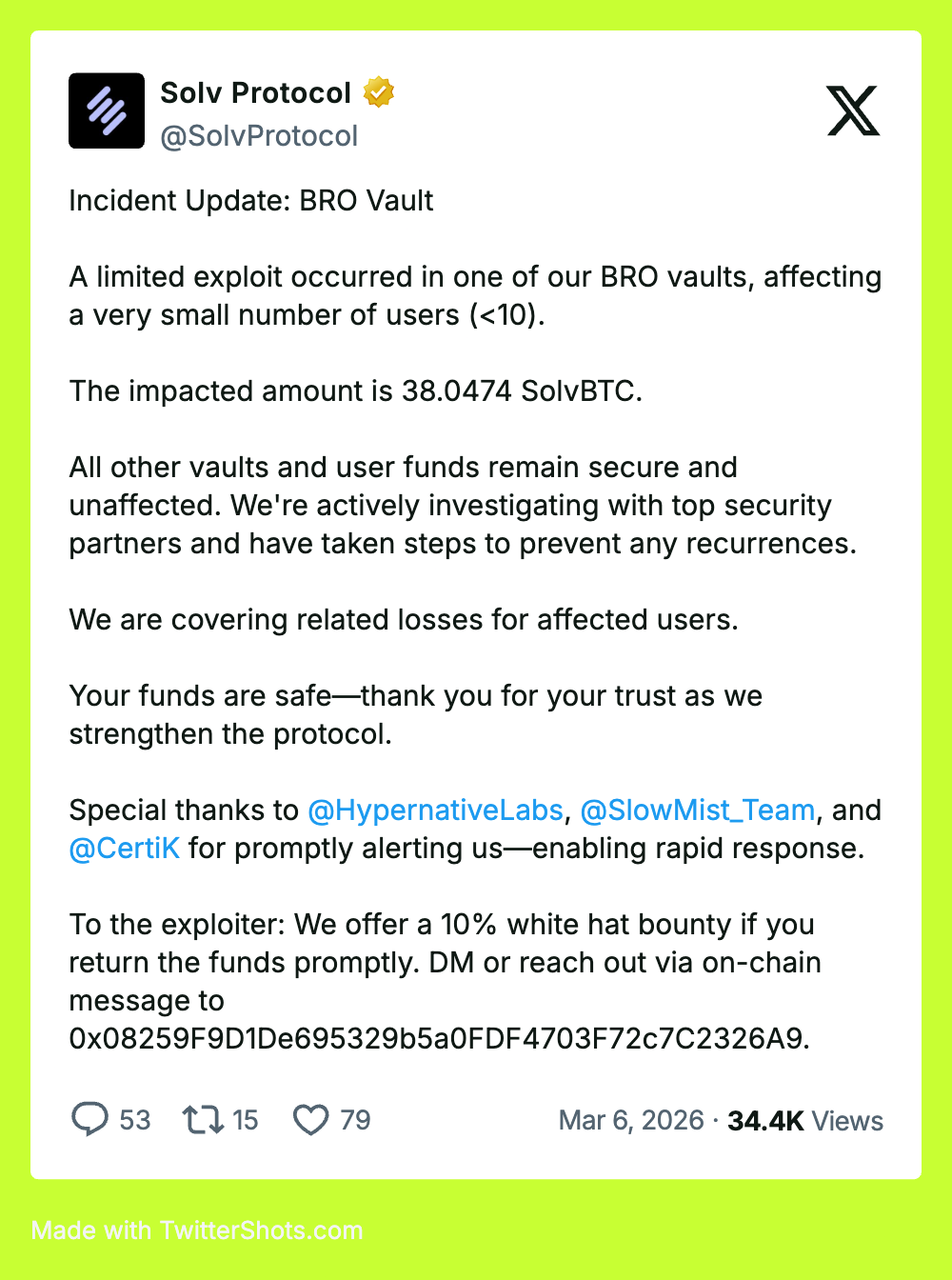

Solv Protocol: $10M Protected in a Non-Customer Detection

On March 5, 2026, Hypernative detected an active exploit against Solv Protocol's BRO-SOLV contract on Ethereum and immediately notified the team, despite Solv not being a customer. The attacker had already drained approximately $2.7M by exploiting a double-minting vulnerability, but at the time of the alert, roughly $10M remained accessible across additional Solv contracts. Rapid detection and response closed that window before the attacker could move to the next stage.

Hackers rely on dwell time. Multi-step attacks, staged withdrawals, and cross-contract drains are features of how exploits work at scale, not edge cases. Real-time monitoring is what collapses that window.

Read more: How Hypernative's Alert Saved $10M in the Solv Protocol Exploit

Alchemix: Automated Response, No Human Required

When the DolaSavings contract on Ethereum was exploited on March 2, 2026, Alchemix's pools were in the direct path. The attacker used a large flash loan to manipulate a pricing mechanism and drain collateral positions: a sophisticated, fast-moving attack that left little time to respond manually. Hypernative detected the attacker's preparation phase before execution and triggered Alchemix's pre-configured automated response, disabling token exposure across all markets without a single manual action.

The exploit cost DolaSavings lenders approximately $240K. Alchemix came through without impact. The defense that worked wasn't a faster human. It was a system already configured to act before anyone picked up the phone.

Read more: How Alchemix Responded to the DolaSavings Exploit Before It Happened

The goal of security isn't better documentation of attacks. It's premonition. Knowing what's going to happen before it does and having a system that acts on that signal while there's still time. Post-mortems tell you what happened. Monitoring tells you what's happening. Real detection, in production, tells you what's coming. That's the only version of security worth buying.

Reach out for a demo of Hypernative's solutions, and follow Hypernative's blog and social channels to keep up with the latest on cybersecurity in Web3.

Secure everything you build, run and own in Web3 with Hypernative.
